There should be a graveyard for blog posts that get started but never get published. Fortunately, I have this site. Here I can share with you my failed posts. Get ready.

It starts with this epic video from the Soyuz MS-10 failed launch.

That’s pretty awesome. It’s doubly awesome that the astronauts survived.

Ok, so what is the blog post? The idea is to use video analysis to track the angular size of stuff on the ground and from that get the vertical position of the rocket as a function of time. It’s not completely trivial, but it’s fun. Also, it’s a big news event, so I could get a little traffic boost from that.

How do you get the position data? Here are the steps (along with some problems).

The key idea is the relationship between angular size, actual size and distance. If the angular size is measured in radians (as it should be), the following is true, where L is the actual length, θ is the angular size, and r is the distance:

$$L = r\theta$$
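
To make that relationship concrete, here is a minimal Python sketch that solves L = rθ for the distance. The function name and the numbers are made up for illustration; they are not measurements from the video.

```python
import math

def distance_from_angular_size(actual_length_m, angular_size_rad):
    """Solve L = r * theta for r (theta must be in radians)."""
    return actual_length_m / angular_size_rad

# Hypothetical example: a 100 m wide structure that spans 2 degrees of view.
pad_width_m = 100.0
theta_rad = math.radians(2.0)

r = distance_from_angular_size(pad_width_m, theta_rad)
print(f"distance = {r:.0f} m")
```

If the camera looks straight down, that distance is the altitude of the rocket.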

Problem number 1 – find the actual size of stuff on the ground. This is sort of fun. You can snoop around with Google Maps until you find stuff. I started by googling the launch site. The first place I found wasn’t it. Then after some more searching, I found Gagarin’s Start. That’s the place. Oh, Google Maps lets you measure the size of stuff. Super useful.

Finding the angular size is a little bit more difficult. I can use video analysis to mark the location of stuff (I use Tracker Video Analysis because it’s both free and awesome). However, to get the angular distance between two points I need to know the angular field of view—the angular size of the whole camera view. This usually depends on the camera, which I don’t know.

How do you find the angular field of view for the camera? One option is to start with a known distance and a known object. Suppose I start off with the base of the Soyuz rocket. If I know the size of the bottom thruster and the distance to the thruster, I can calculate the correct angular size and use that value to scale the video. But I don’t know the exact location of the camera. I could only guess.
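
Here is a rough sketch of how that calibration could work if you did know the camera distance. It assumes an ideal pinhole camera (no lens distortion) and an object centered in the frame. All of the function names and numbers are hypothetical; none of them come from the actual video.

```python
import math

def focal_length_px(frame_width_px, fov_rad):
    """Pinhole focal length, in pixels, for a given horizontal field of view."""
    return (frame_width_px / 2) / math.tan(fov_rad / 2)

def angular_size_rad(separation_px, frame_width_px, fov_rad):
    """Angular size of a centered span of pixels, given the field of view."""
    f = focal_length_px(frame_width_px, fov_rad)
    return 2 * math.atan((separation_px / 2) / f)

def fov_from_known_object(size_m, distance_m, separation_px, frame_width_px):
    """Calibrate the field of view from an object of known size and distance."""
    theta = 2 * math.atan(size_m / (2 * distance_m))   # true angular size
    f = (separation_px / 2) / math.tan(theta / 2)      # focal length in pixels
    return 2 * math.atan((frame_width_px / 2) / f)

# Made-up calibration: a 10 m object, 100 m away, spanning 192 of 1920 pixels.
fov = fov_from_known_object(size_m=10.0, distance_m=100.0,
                            separation_px=192, frame_width_px=1920)
print(f"field of view = {math.degrees(fov):.1f} degrees")
```

Once the field of view is known, `angular_size_rad` converts any pixel separation in a later frame into an angle.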

As Yoda says, “there is another”. OK, he was talking about another person that could become a Jedi (Leia), but it’s the same idea here. The other way is to get position-time data from some other source and then match that up to the position-time data from the angular size. Oh, I’m in luck. Here is another video.

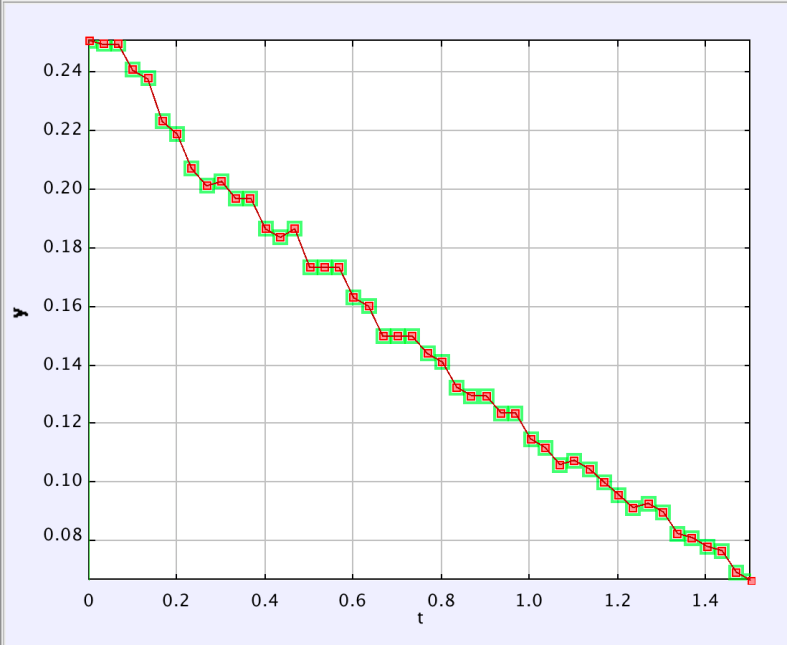

This video shows the same launch from the side. I can use normal video analysis in this case to get the position as a function of time. I just need to scale the video in terms of size. Assuming this site is legit, I have the dimensions of a Soyuz rocket. Boom, that’s it (oh, I need to correct for the motion of the camera—but that’s not too difficult). Here is the plot of vertical position as a function of time.

Yes, that does indeed look like a parabola, which indicates a constant acceleration (at least for this first part of the flight). The term in front of t² is 1.73 m/s², which is half of the acceleration. This puts the launch acceleration at around 3.46 m/s². Oh, that’s not good. Not nearly good enough. I’m pretty sure a rocket has an acceleration of at least around 3 g’s, and this isn’t even 1 g. I’m not sure what went wrong.
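
If you want to do the fit yourself instead of letting the video analysis software do it, here is a minimal sketch of a least-squares quadratic fit in plain Python. The data are synthetic (generated with a made-up acceleration of 4 m/s²), not the rocket data, and `fit_quadratic` is just a name I picked.

```python
def fit_quadratic(ts, ys):
    """Least-squares fit of y = c2*t**2 + c1*t + c0 using the normal equations."""
    # Sums of powers of t, and moments of y against powers of t.
    s = [sum(t**k for t in ts) for k in range(5)]
    b = [sum(y * t**k for t, y in zip(ts, ys)) for k in range(3)]
    # Normal equations A @ [c0, c1, c2] = b, with A[i][j] = sum of t**(i+j).
    A = [[s[i + j] for j in range(3)] for i in range(3)]

    def det3(m):
        return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
                - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
                + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))

    # Solve the 3x3 system with Cramer's rule.
    d = det3(A)
    coeffs = []
    for col in range(3):
        m = [row[:] for row in A]
        for i in range(3):
            m[i][col] = b[i]
        coeffs.append(det3(m) / d)
    c0, c1, c2 = coeffs
    return c2, c1, c0

# Synthetic data: constant acceleration from rest (made-up value).
true_accel = 4.0  # m/s^2
ts = [0.1 * i for i in range(50)]
ys = [0.5 * true_accel * t**2 for t in ts]

c2, c1, c0 = fit_quadratic(ts, ys)
accel = 2 * c2  # the t^2 coefficient is half of the acceleration
print(f"acceleration = {accel:.2f} m/s^2 = {accel / 9.8:.2f} g")
```

The last step is the important one: the coefficient in front of t² has to be doubled to get the acceleration.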

OK, one problem won’t stop me. Let’s just go to the other video and see what we can get. Here is what the data looks like for a position of one object on the ground.

You might not see the problem (but it sticks out when you are doing an analysis). Notice the position stays at the same value for multiple time steps? This is because the video was edited and exported to some non-native frame rate. What happens is that you get repeating frames. You can see this if you step through the video frame by frame.
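
One crude way to deal with this is to throw out any data point whose position exactly repeats the previous one. Here is a sketch with made-up data. Note the assumptions: it would also throw out an object that really is stationary, and the times of the frames that remain still come from the re-exported video, so they might not match the original timing.

```python
def drop_repeated_frames(times, positions, tol=1e-9):
    """Keep only the points whose position differs from the last kept point."""
    kept_t, kept_x = [times[0]], [positions[0]]
    for t, x in zip(times[1:], positions[1:]):
        if abs(x - kept_x[-1]) > tol:
            kept_t.append(t)
            kept_x.append(x)
    return kept_t, kept_x

# Made-up tracking data with duplicated frames.
times = [0, 1, 2, 3, 4]
positions = [1.0, 1.0, 2.0, 2.0, 3.0]

clean_t, clean_x = drop_repeated_frames(times, positions)
print(clean_t, clean_x)
```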

It was at this point that I said “oh, forget it”. Maybe it would turn out ok, but it was going to be a lot of work. Not only would I still have to figure out the angular field of view for the camera, but I would also need to export the data for two points on the ground to a spreadsheet so that I could find the absolute distance between them (essentially using the magnitude of the vector from point A to point B). Oh, but that’s not all. When the rocket gets high enough, the object I was using becomes too small to see, so I would need to switch to a larger object.
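
The distance between the two tracked points doesn't have to happen in a spreadsheet. As a sketch, here is the same magnitude calculation in Python. The point coordinates are invented, and the variable names are my own.

```python
import math

def separation(point_a, point_b):
    """Magnitude of the vector from point A to point B."""
    return math.hypot(point_b[0] - point_a[0], point_b[1] - point_a[1])

# Made-up pixel positions of two ground points in three frames.
track_a = [(100.0, 200.0), (110.0, 205.0), (120.0, 210.0)]
track_b = [(400.0, 600.0), (380.0, 565.0), (360.0, 530.0)]

separations_px = [separation(a, b) for a, b in zip(track_a, track_b)]
print(separations_px)
```

As the rocket climbs, this pixel separation shrinks, and that shrinking is what carries the altitude information.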

Finally, as the rocket turns to enter low Earth orbit, it no longer points straight up. The stuff in the camera’s view is then much farther away than the altitude of the rocket, so the distance you get from the angular size isn’t the height anymore.

OK, that’s no excuse. I should have kept calm and carried on. But I bailed. The Soyuz booster failure was quite some time ago and this video analysis wouldn’t really add much to the story. It’s still a cool analysis—I’ve started it here so you can finish it for homework.

Also, you can see what happens when I kill a post (honestly, this doesn’t happen very often).

Actually, there is one other reason to not continue with this analysis. I have another blog post that I’m working on that deals with angular size (ok, I haven’t started it, but I promise I will). That post will be much better and I didn’t want two angular size posts close together.

The end.